The Partisan Effects of Social Media Bans

Evidence from Brazil’s X Ban

Georgetown University, McCourt School of Public Policy – 2026

Democratic Platform Bans

We know a lot about information control under authoritarianism (Roberts 2018; Pan and Siegel 2020; Boxell and Steinert-Threlkeld 2022), but almost nothing about platform bans and the escalation of platform governance policies in democracies

Yet democratic governments are increasingly regulating online platforms, and conflicts over these policies sometimes escalate into full nationwide bans:

- Ukraine blocked VKontakte to curb Russian propaganda (2017)

- India and Nepal have blocked TikTok and other platforms during political turmoil

- The US forced a sale of TikTok

- Brazil banned X nationwide for 39 days (Aug 30 – Oct 8, 2024)

Context: The X Ban in Brazil

After Bolsonaro’s 2022 defeat, his supporters contested the results through truck lockouts, encampments, and an intense social media campaign

January 8, 2023: Bolsonaro supporters stormed Congress, the Supreme Court, and the presidential palace

The Supreme Court opened judicial inquiries and requested the removal of accounts spreading misinformation. Meta and Google complied. X refused.

August 2024: X’s legal representative in Brazil resigned; leadership refused to name a replacement

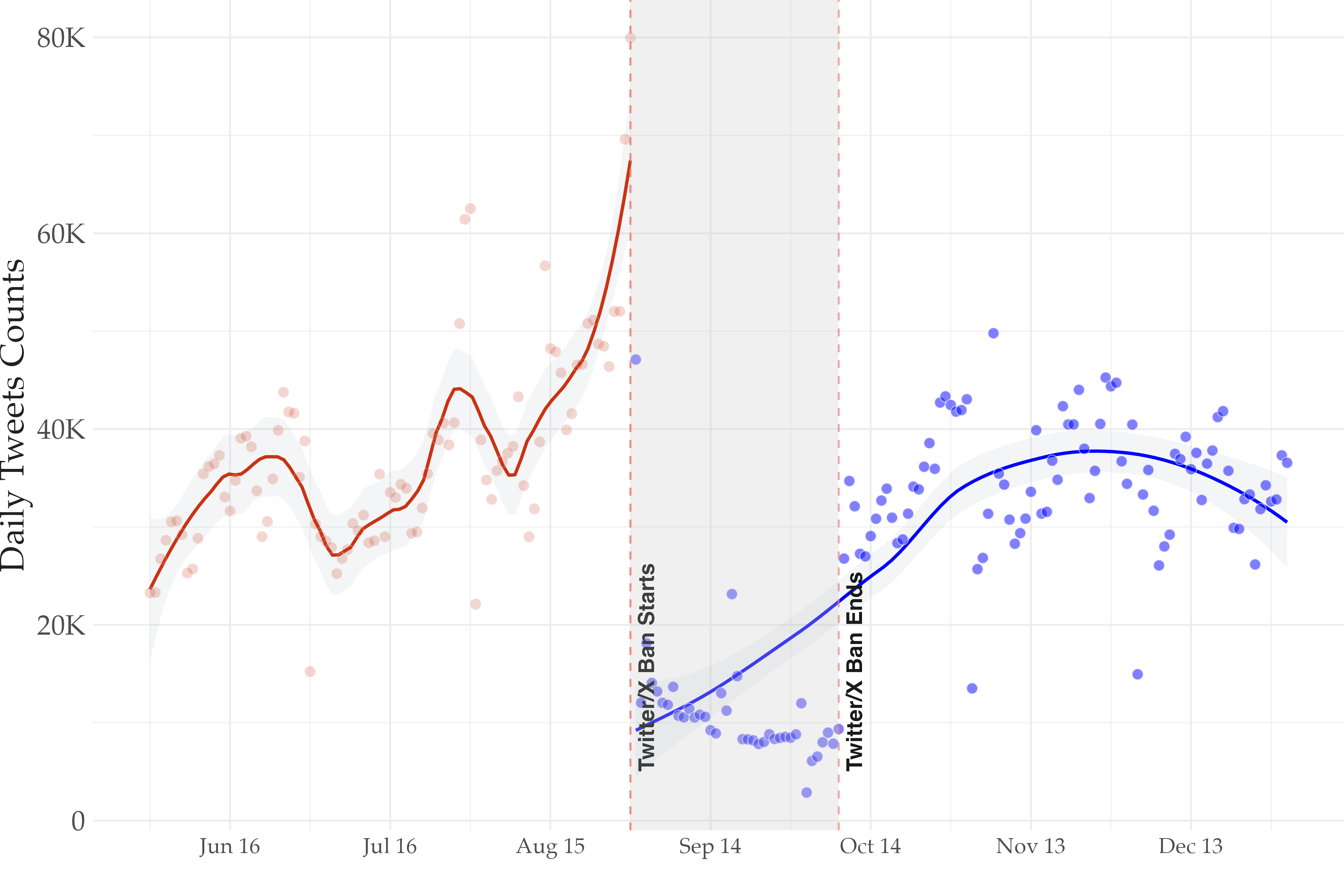

August 30: Supreme Court ordered a nationwide ban on X – affecting ~40 million users

October 8: Ban lifted after X complied with court demands (~39 days total)

How do partisan dynamics in polarized democracies shape compliance with platform bans, and what are the consequences for the information environment in the short and long run?

A theory of partisan platform segmentation

When platform governance becomes politicized, partisans face different incentives to leave the platform or to circumvent the ban. The choice between exit and circumvention depends on two distinct types of incentives:

- Reputational incentives: Complying signals respect for institutional authority; circumventing signals defiance and partisan loyalty

- Informational incentives: If your co-partisans leave, the remaining content becomes less useful, lowering the payoff to staying

Ratchet effect: Co-partisans make similar exit/stay decisions, so the platform sorts along partisan lines, and the effect doesn’t fully reverse even after the ban is lifted. This is especially likely as the digital space fragments across more platforms.
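The ratchet logic can be illustrated with a toy threshold model. This is a hypothetical sketch, not the authors' model: the function `simulate_ratchet`, the payoff weights, the evenly spaced exit thresholds, and the `return_cost` of rejoining after settling elsewhere are all assumptions, and nothing is calibrated to the Brazil data. Each user stays on (or returns to) X only if a baseline value plus the share of active co-partisans (the informational incentive), adjusted during the ban by a circumvention cost and a party-specific reputational term, clears that user's threshold.

```python
def simulate_ratchet(n=100, theta_max=0.9, base_value=0.2, info_weight=0.8,
                     circumvent_cost=0.4, reputation=None, return_cost=0.1,
                     max_rounds=500):
    """Toy model (hypothetical parameters) of partisan exit and return around a ban.

    Returns the share of each party active on the platform at the end of the
    'before', 'during', and 'after' phases.
    """
    if reputation is None:
        # Assumed reputational payoff of circumventing: positive for the party
        # that reads circumvention as loyalty, negative for the other.
        reputation = {"right": 0.3, "left": -0.1}
    # Evenly spaced, deterministic exit thresholds (an assumption for illustration)
    thresholds = [(k + 0.5) / n * theta_max for k in range(n)]
    active = {party: [True] * n for party in reputation}
    history = {}

    def payoff(party, ban):
        # Informational incentive: value rises with the share of active co-partisans
        share = sum(active[party]) / n
        u = base_value + info_weight * share
        if ban:
            # During the ban, staying requires circumvention plus a reputational term
            u += reputation[party] - circumvent_cost
        return u

    for phase, ban in (("before", False), ("during", True), ("after", False)):
        for _ in range(max_rounds):
            changed = False
            for party in active:
                u = payoff(party, ban)
                new_state = []
                for theta, is_on in zip(thresholds, active[party]):
                    if is_on:
                        new_state.append(u >= theta)                # stay unless payoff drops below threshold
                    else:
                        new_state.append(u >= theta + return_cost)  # returning costs extra
                if new_state != active[party]:
                    active[party] = new_state
                    changed = True
            if not changed:  # fixed point reached for this phase
                break
        history[phase] = {party: sum(active[party]) / n for party in active}
    return history
```

With these illustrative defaults, left-leaning users cascade off the platform during the ban and only partly return afterward, because each return decision is made while few co-partisans are present; right-leaning users stay throughout. Other parameter values change the magnitudes, so the output illustrates the mechanism rather than estimating anything.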

Data & Design

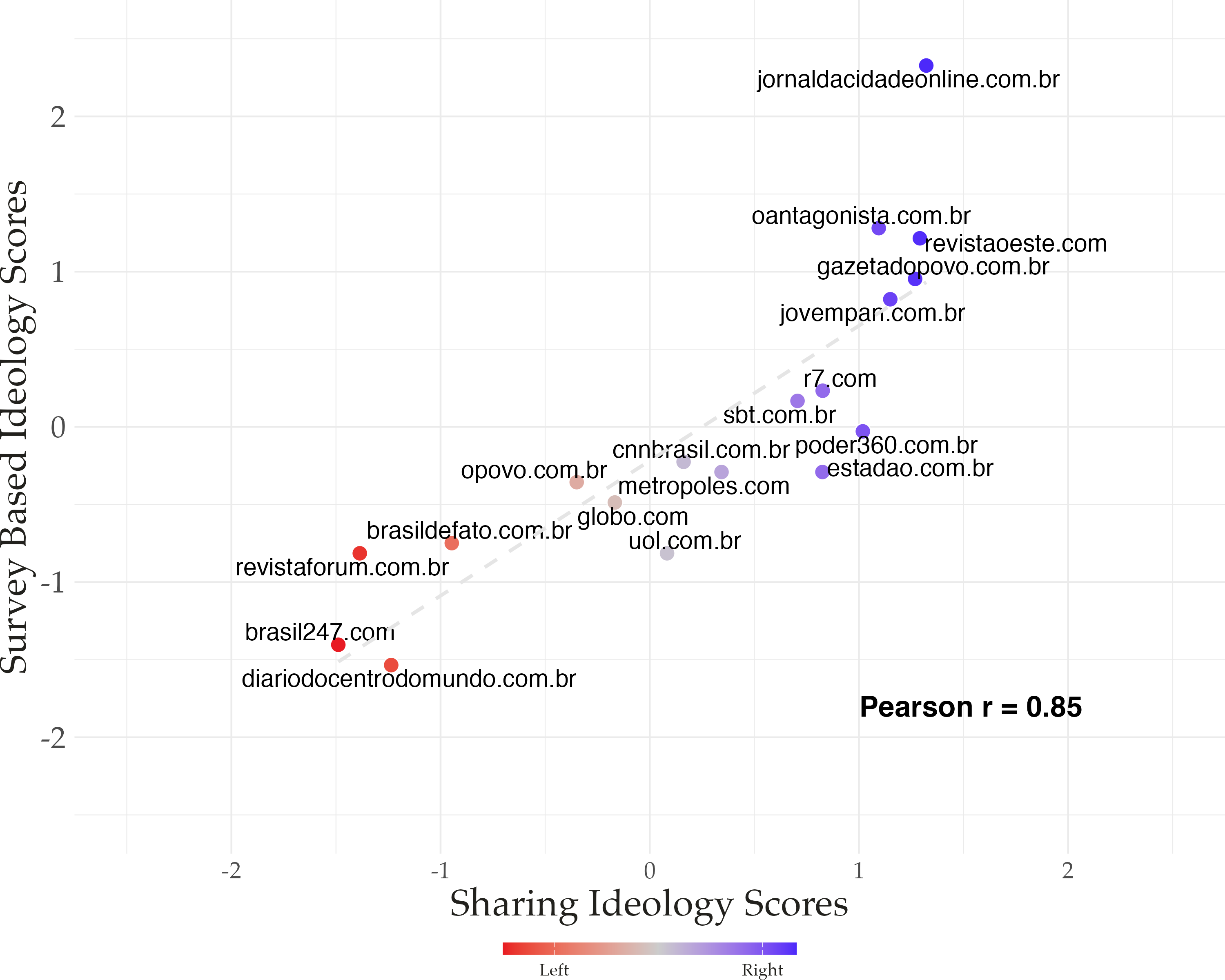

Pre-ban data: 14M tweets via the X Decahose to estimate ideology

Source: X Decahose API, a 10% real-time sample of all public tweets

- 90 days before the ban (June – August 2024)

- Filtered to Portuguese-language tweets containing a URL, ~14 million tweets total

- Feeds the user × news-domain matrix used for ideology recovery

- Domains: >100 total shares, shared by >10 distinct users

- Users: shared >5 distinct political news domains

- Correspondence analysis recovers ideology from news-sharing behavior (Eady et al. 2024)
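The filtering rules and the scaling step above can be sketched in standard-library Python. This is an illustrative reimplementation under assumptions, not the authors' pipeline: the function names are hypothetical, the domain filter is applied once before the user filter, and the first correspondence-analysis dimension is obtained by power iteration on the matrix of standardized residuals rather than with a full SVD. Users with similar news diets get similar scores; the sign of the axis is arbitrary and has to be oriented afterward.

```python
import math
import random
from collections import Counter, defaultdict


def filter_shares(shares, min_domain_shares=100, min_domain_users=10, min_user_domains=5):
    """Apply the inclusion rules to (user, domain) share events.

    Strict inequalities mirror the rules listed above; applying the domain
    rule before the user rule (in a single pass) is an assumption.
    """
    domain_shares = Counter(d for _, d in shares)
    domain_users = defaultdict(set)
    for u, d in shares:
        domain_users[d].add(u)
    keep_domains = {d for d in domain_shares
                    if domain_shares[d] > min_domain_shares
                    and len(domain_users[d]) > min_domain_users}
    kept = [(u, d) for u, d in shares if d in keep_domains]
    user_domains = defaultdict(set)
    for u, d in kept:
        user_domains[u].add(d)
    keep_users = {u for u, ds in user_domains.items() if len(ds) > min_user_domains}
    return [(u, d) for u, d in kept if u in keep_users]


def ca_first_dimension(shares, iters=200, seed=0):
    """User scores on the first CA dimension of the user x domain count matrix."""
    counts = Counter(shares)
    users = sorted({u for u, _ in shares})
    domains = sorted({d for _, d in shares})
    total = sum(counts.values())
    n_u, n_d = len(users), len(domains)
    # Correspondence matrix P and its row/column masses r, c
    P = [[counts[(u, d)] / total for d in domains] for u in users]
    r = [sum(row) for row in P]
    c = [sum(P[i][j] for i in range(n_u)) for j in range(n_d)]
    # Standardized residuals (P - r c^T) / sqrt(r c^T); subtracting r c^T removes the trivial dimension
    S = [[(P[i][j] - r[i] * c[j]) / math.sqrt(r[i] * c[j]) for j in range(n_d)]
         for i in range(n_u)]
    # Power iteration for the leading left singular vector of S
    rng = random.Random(seed)
    vec = [rng.uniform(-1, 1) for _ in range(n_u)]
    for _ in range(iters):
        w = [sum(S[i][j] * vec[i] for i in range(n_u)) for j in range(n_d)]
        vec = [sum(S[i][j] * w[j] for j in range(n_d)) for i in range(n_u)]
        norm = math.sqrt(sum(x * x for x in vec))
        vec = [x / norm for x in vec]
    # Leading singular value = length of S^T vec for the unit vector vec
    sigma = math.sqrt(sum(sum(S[i][j] * vec[i] for i in range(n_u)) ** 2 for j in range(n_d)))
    # Principal row coordinates; the sign of the axis is arbitrary
    return {user: sigma * vec[i] / math.sqrt(r[i]) for i, user in enumerate(users)}
```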

Panel data: 7,471 politically engaged users tracked across 7 months

Source: Full timelines scraped via public Nitter instances (Decahose access was lost mid-project)

- Sample frame: users from the pre-ban data who shared ≥5 distinct political news domains → 9,061 candidates

- Successfully collected: 7,471 of 9,061 (~18% attrition: account deletion, handle changes, suspension, private settings)

- Window: June 1 – December 31, 2024

- ~6.7M tweets, ~430K political news shares

- Non-random sample, but a small share of users generates most political content on the platform (Grinberg et al. 2019; Baribi-Bartov et al. 2024).
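A zero-filled user-day panel is the natural input for a daily count model: days on which a user posts nothing (common during the ban) should enter as zeros rather than go missing. The sketch below builds that panel over the June 1 to December 31, 2024 window and checks the attrition figure, 1 - 7,471/9,061 ≈ 0.175, which rounds to the reported ~18%. The function names and the `(user_id, date)` input format are hypothetical assumptions about how scraped timelines might be stored.

```python
from collections import Counter
from datetime import date, timedelta


def attrition_rate(n_candidates, n_collected):
    """Share of sampled candidates whose timelines could not be collected."""
    return 1 - n_collected / n_candidates


def build_user_day_panel(tweets, users, start=date(2024, 6, 1), end=date(2024, 12, 31)):
    """Zero-filled tweet counts for every (user, day) in the window.

    tweets: iterable of (user_id, date) pairs (hypothetical storage format).
    Tweets outside the window are ignored.
    """
    counts = Counter((u, d) for u, d in tweets if start <= d <= end)
    n_days = (end - start).days + 1
    days = [start + timedelta(days=k) for k in range(n_days)]
    # Every user appears on every day, with 0 when they posted nothing
    return {(u, d): counts.get((u, d), 0) for u in users for d in days}
```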

Results: Validation

Behavioral scores correlate at r = 0.85 with survey-based measures
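Because the sign of a correspondence-analysis axis is arbitrary, behavioral scores need to be oriented before they are compared with survey-based measures. A minimal sketch, assuming paired scores for the same units and a hypothetical `anchor` unit known to be right-leaning (the orientation rule is an assumption, not specified here): flip the axis so the anchor scores positive, then compute Pearson's r.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def orient(scores, anchor):
    """Flip the arbitrary CA sign so that a known right-leaning anchor scores > 0."""
    if scores[anchor] < 0:
        return {k: -v for k, v in scores.items()}
    return dict(scores)
```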

Results

The ban cut posting and news sharing sharply – but not to zero

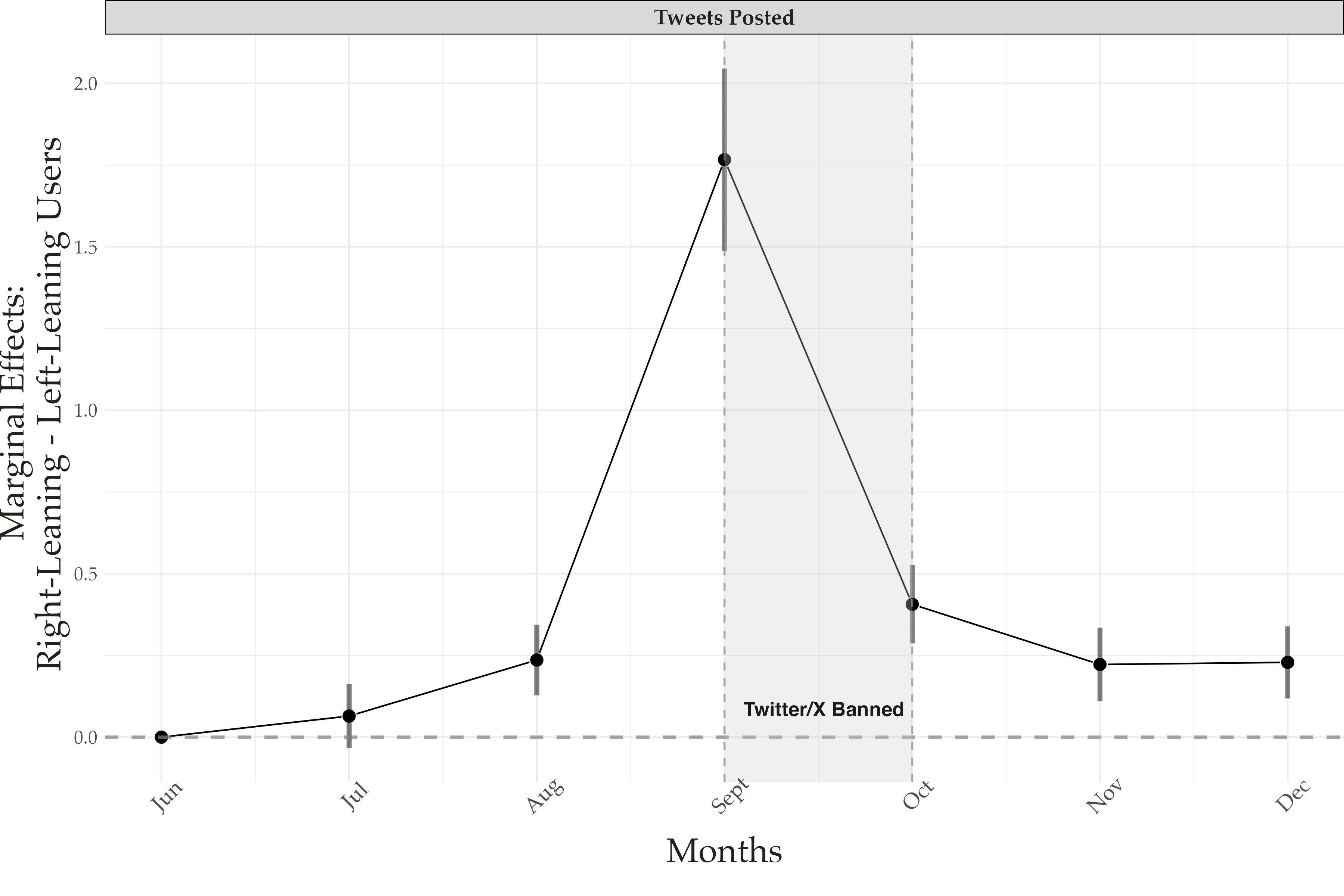

Partisan Effects

We identify the causal effects of the ban using a Poisson event-study model:

\[y_{ij} \sim \text{Poisson}(\lambda_{ij})\]

\[\lambda_{ij} = \exp\left(\alpha_i + \tau_j + \sum_{t=1}^{6} \beta_t \cdot \text{Right-leaning}_i \cdot \text{Month}_t\right)\]

Where:

- \(y_{ij}\) - Count of tweets by user \(i\) on day \(j\)

- \(\alpha_i\) - User fixed effects (absorbs baseline activity differences)

- \(\tau_j\) - Day fixed effects (absorbs platform-wide shocks)

- \(\text{Right-leaning}_i\) - Binary: ideology score > 0

- \(\text{Month}_t\) - Indicator for days in month \(t\) (July through December; June = baseline)

- \(\beta_t\) - Parameters of interest: \(e^{\beta_t}\) is the ratio of right- to left-leaning users' expected activity in month \(t\), relative to the same ratio in June
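The estimation routine is not specified above. As a self-contained illustration only, the sketch below fits the same specification by block coordinate ascent, a technique swapped in here because it needs nothing beyond the standard library. Since every regressor is an indicator, each block of parameters (user effects, day effects, and the Right × Month terms) has a closed-form update that sets observed counts equal to expected counts over the cells it covers. The function name and input format (a zero-filled `(user, day) -> count` panel, a `right` flag per user, and a month index per day with 0 for June) are assumptions. It returns \(e^{\beta_t}\), the incidence-rate ratio for month \(t\).

```python
import math


def poisson_event_study(y, right, month_of, n_months=6, max_iter=5000, tol=1e-10):
    """Poisson MLE with user FE, day FE, and Right x Month interactions (sketch).

    y: dict {(user, day): count}, zero-filled; right: dict {user: bool};
    month_of: dict {day: 0..n_months}, with 0 the baseline month (June).
    Assumes every user, every day, and every right-leaning user-month block has a
    positive total count; all-zero units have no finite MLE and should be dropped.
    """
    users = sorted({i for i, _ in y})
    days = sorted({j for _, j in y})
    alpha = {i: 0.0 for i in users}
    tau = {j: 0.0 for j in days}
    beta = {t: 0.0 for t in range(n_months + 1)}  # beta[0] stays 0 (June baseline)

    def x(i, j):
        # Interaction term: beta_t applies only to right-leaning users in month t
        return beta[month_of[j]] if right[i] else 0.0

    for _ in range(max_iter):
        old = (dict(alpha), dict(tau), dict(beta))
        # Each update solves its block's first-order condition exactly:
        # sum of observed counts = sum of expected counts over the block's cells.
        for i in users:
            obs = sum(y[(i, j)] for j in days)
            expected = sum(math.exp(tau[j] + x(i, j)) for j in days)
            alpha[i] = math.log(obs / expected)
        for j in days:
            obs = sum(y[(i, j)] for i in users)
            expected = sum(math.exp(alpha[i] + x(i, j)) for i in users)
            tau[j] = math.log(obs / expected)
        for t in range(1, n_months + 1):
            cells = [(i, j) for i in users if right[i] for j in days if month_of[j] == t]
            obs = sum(y[c] for c in cells)
            expected = sum(math.exp(alpha[i] + tau[j]) for i, j in cells)
            beta[t] = math.log(obs / expected)
        change = max(max(abs(p[k] - o[k]) for k in p)
                     for p, o in zip((alpha, tau, beta), old))
        if change < tol:
            break
    return {t: math.exp(beta[t]) for t in range(1, n_months + 1)}
```

This returns point estimates only; in applied work a dedicated fixed-effects Poisson estimator that also reports clustered standard errors would be the usual choice.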

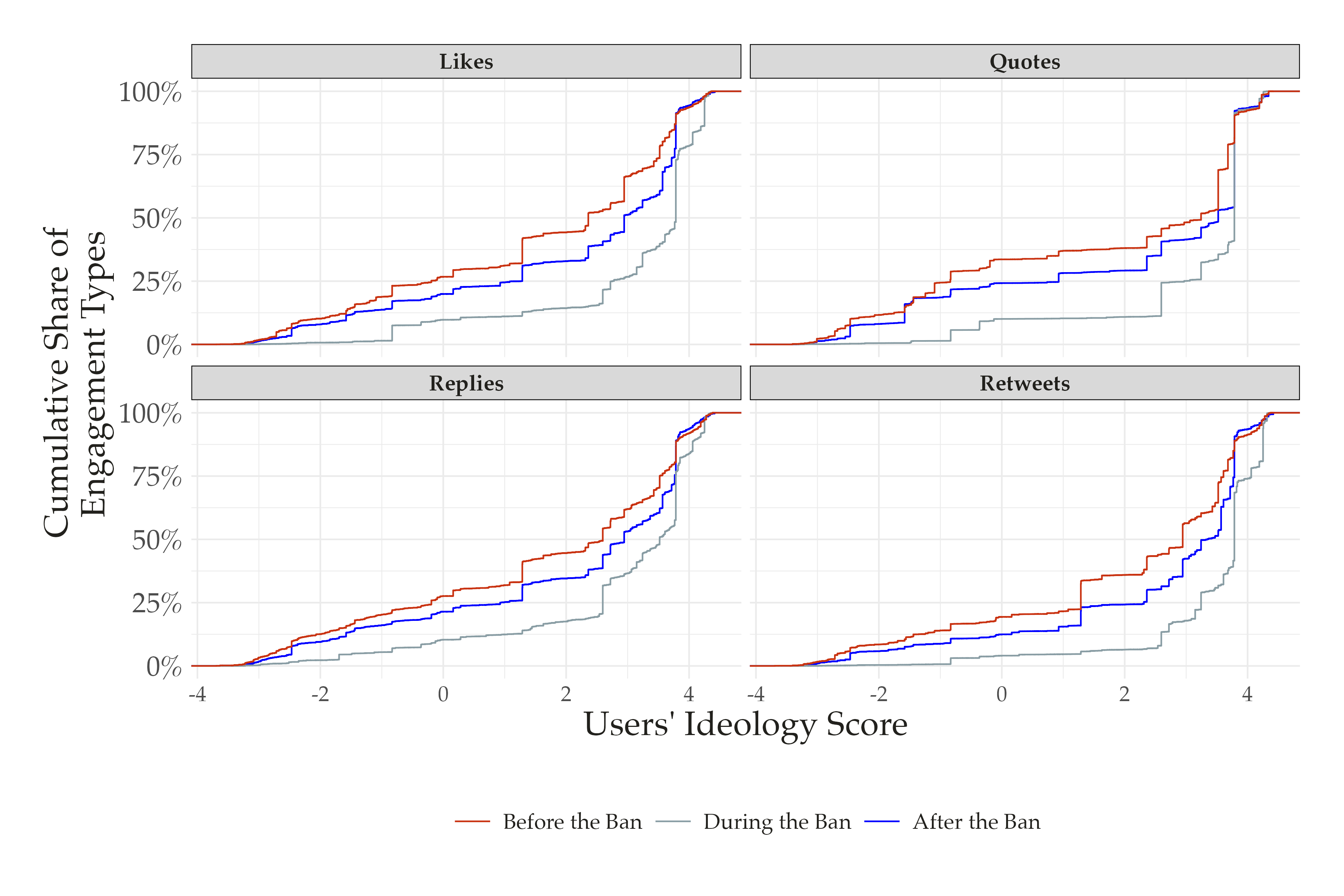

Right-leaning users were 5.8x more active during the ban

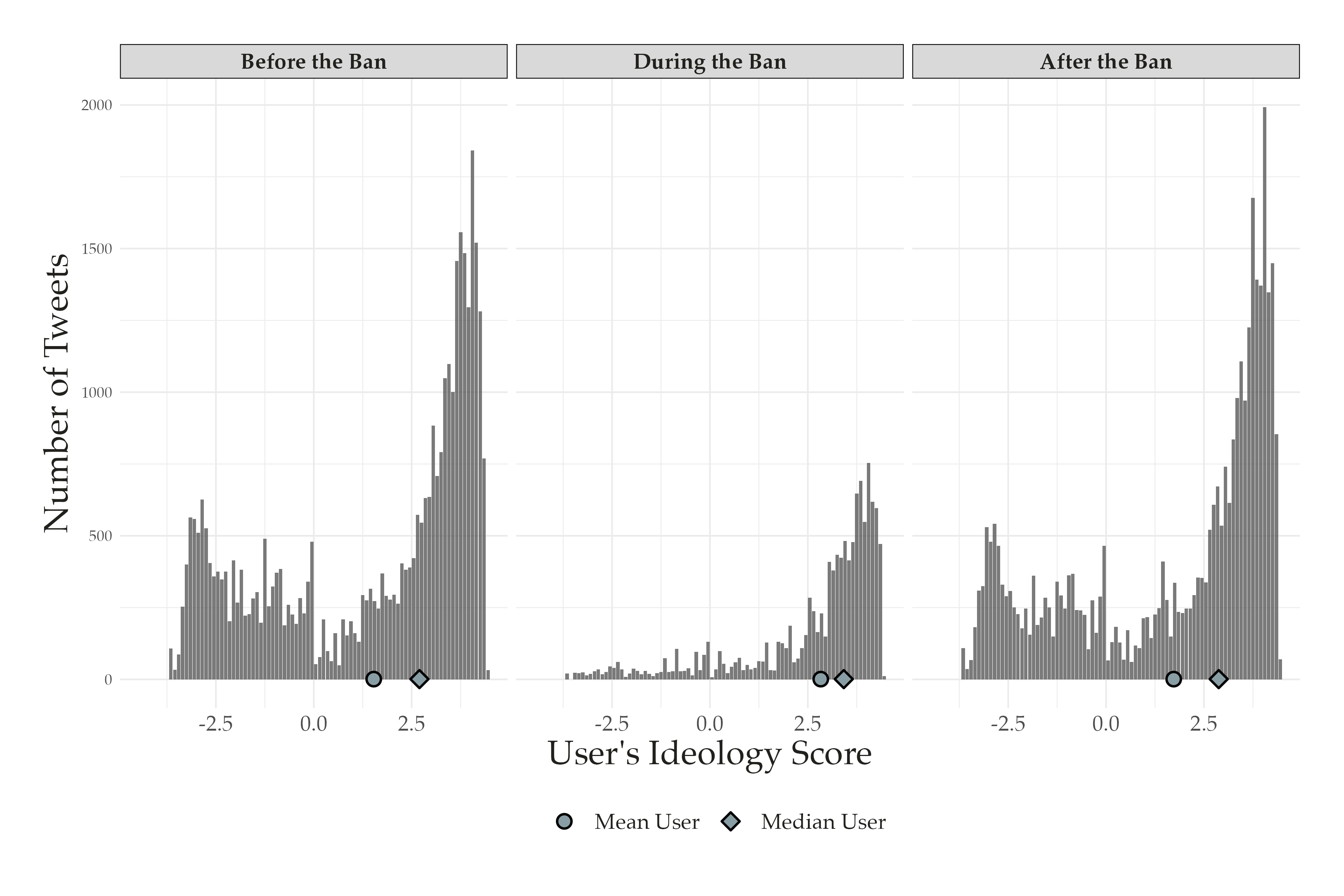

Right-leaning users produced the vast majority of content during and after the ban

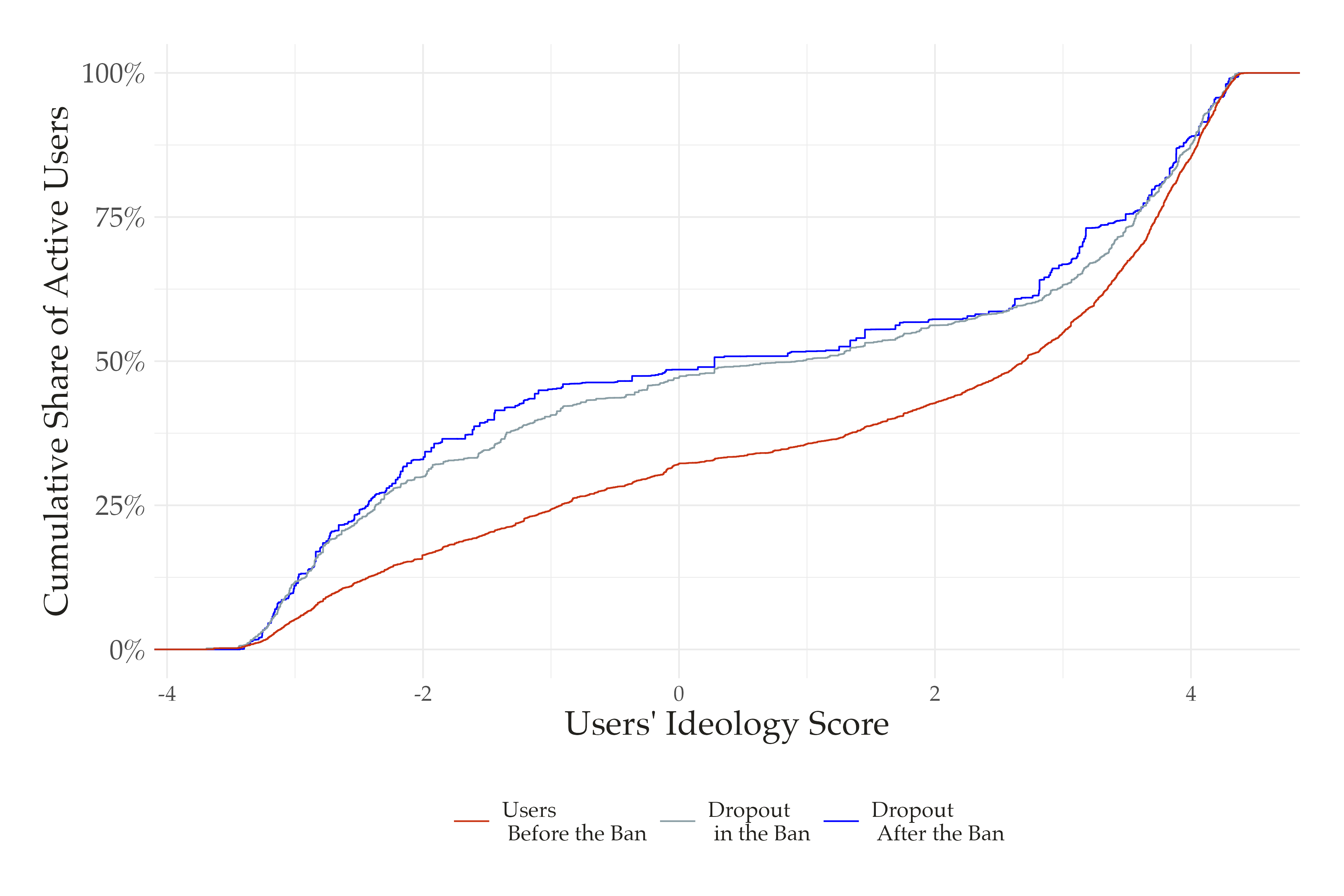

9% of politically engaged users never returned to X

Engagement became concentrated among right-leaning users – and stayed that way
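The never-returned share and the partisan composition of content can both be computed from a user-day panel. In this hypothetical sketch, a user "never returned" if they tweeted before August 30 and posted nothing from October 8 onward; that operational definition, the function names, and the `(user, date) -> count` panel format are assumptions rather than the paper's exact coding.

```python
from collections import Counter
from datetime import date

BAN_START = date(2024, 8, 30)  # ban ordered
BAN_END = date(2024, 10, 8)    # ban lifted


def never_returned_share(panel):
    """Share of pre-ban active users with zero tweets from the lift date onward."""
    pre, post = Counter(), Counter()
    for (user, day), n in panel.items():
        if day < BAN_START:
            pre[user] += n
        elif day >= BAN_END:
            post[user] += n
    active_before = [u for u in pre if pre[u] > 0]
    gone = [u for u in active_before if post[u] == 0]
    return len(gone) / len(active_before)


def right_share_of_content(panel, right, start, end):
    """Share of tweets in [start, end) posted by right-leaning users."""
    total = right_total = 0
    for (user, day), n in panel.items():
        if start <= day < end:
            total += n
            if right[user]:
                right_total += n
    return right_total / total
```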

Implications

Conflicts over platform regulation in polarized democracies can generate durable partisan shifts in participation

These changes are sticky: three months after the ban was lifted, X was still more conservative than before the ban, and 9% of politically engaged users never came back.

Echo chambers are no longer a region within a platform's user graph; the platform itself becomes the echo chamber.

Policy Trade-off: Measures to curb misinformation may produce unintended downstream effects in the digital ecosystem.

Thank you

tiago.ventura@georgetown.edu

CPAC WashU